David Beamonte

on 11 March 2026

The bare metal problem in AI Factories

Operational challenges in modern AI infrastructure

As AI platforms grow in scale, many of the limiting factors are no longer related to model design or algorithmic performance, but to the operation of the underlying infrastructure. GPU accelerators are key components and are responsible for a large part of the total system cost, which makes their continuous availability and stable operation critical to the output and efficiency of the entire AI platform. However, hardware failures, driver issues, and configuration problems happen periodically, bringing down the usable capacity.

These challenges become more visible in large, continuously operating environments, such as AI Factories, where infrastructure is expected to run at high utilization over long periods of time. In such deployments, even short disruptions at the hardware level translate directly into lost throughput and reduced return on investment. As a result, the reliability and manageability of the physical infrastructure have become central concerns in the design and operation of modern AI systems. In this article, we’ll explore why bare metal automation has become so critical for AI infrastructure, and highlight how Canonical MAAS can be a solution for AI Factories.

AI Factories: cloud-native software, bare metal hardware

AI Factories are large-scale AI environments designed to operate continuously, converting data and compute capacity into models, predictions, or generated content. They are typically built to maximize throughput from expensive accelerator hardware and to support a steady flow of training and inference workloads. The term is relatively new, and it describes a class of deployments where efficiency, repeatability, and sustained utilization are primary design goals.

From an architectural perspective, AI Factories are cloud-native at the software layer. Workloads are commonly containerized and orchestrated using Kubernetes, which provides scheduling, isolation, and automation for AI jobs. At the same time, these environments often rely on direct access to physical servers to meet performance requirements. Virtualization is frequently avoided in order to preserve low latency, full GPU access, and high-speed networking. The result is a layered architecture in which cloud-native software depends on a bare metal foundation that must be stable, consistent, and efficiently managed.

The role of bare metal automation in AI infrastructure

In these GPU-dense AI environments, hardware and low-level software issues are not exceptional events but expected operational conditions. Hardware failures, driver incompatibilities, kernel-level errors, and configuration drift occur regularly at scale and result in unusable machines, usually for extended periods of time. If recovery and reprovisioning depend on manual intervention, these failures lead directly to long downtime and reduced hardware capacity – so this is where bare metal automation comes in.

Bare metal automation refers to the automated provisioning, configuration, and lifecycle management of physical servers. This includes operating system deployment, configuration, and hardware validation. It enables infrastructure teams to keep a consistent and healthy inventory, reprovision nodes easily, and recover from hardware or system-level failures with minimal manual intervention. With bare metal automation, organizations can treat physical servers as flexible resources, limiting the operational impact of hardware faults and maintaining a higher level of effective capacity across the AI platform.

Canonical MAAS as a foundation for AI Factories operations

Bare metal as cloud-like resources

Canonical MAAS provides a way to manage bare metal infrastructure with the same expectations of automation and elasticity commonly associated with cloud platforms. It turns physical servers into programmable resources that can be provisioned, reconfigured, and redeployed through automated workflows, rather than manual processes. The support for Infrastructure as Code tools, such as Terraform provides a complete integration with the rest of the AI infrastructure.

This flexibility is particularly well suited to AI environments, where hardware must be brought online quickly, recovered reliably after failures, and reassigned as workload requirements change.

Predictable lifecycle management

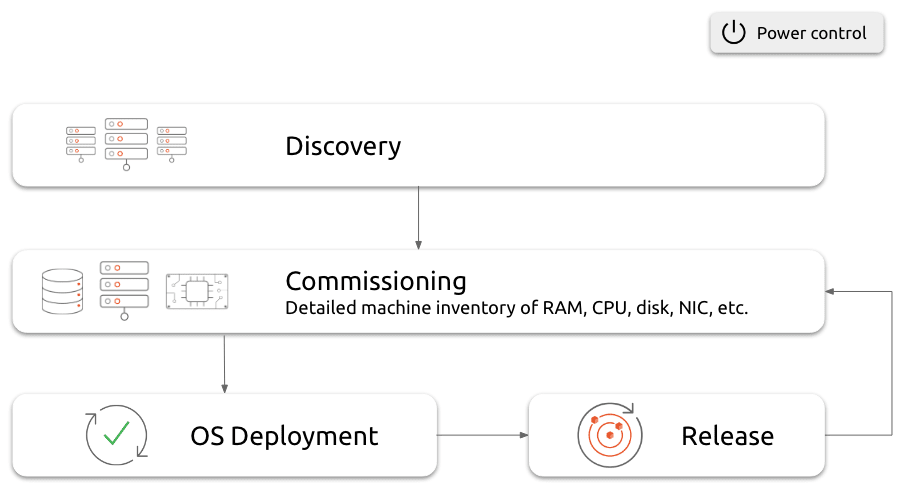

The nodes are constantly accessible from MAAS through the board management controller (BMC), which provides an out-of-band channel that enables MAAS to control the power and the full lifecycle of the nodes:

- Discovery: New machines are discovered and added to the inventory

- Commissioning: Detect the hardware capabilities of the machines (CPU, memory, network interfaces, disks, etc.)

- Deployment: Deploy the operating system using PXE boot on the machine

- Repurposing: Release the machine so that it can be reused

MAAS communicates with the nodes through the board management controller (BMC), which manages the full lifecycle of the machines, enabling automated provisioning, recovery, and recycling of servers, and allowing failed or misconfigured nodes to be returned to service with minimal delay.

A critical aspect of this model is hardware validation and health management. MAAS supports hardware testing as part of the process, allowing systems to be verified before they are placed into production. This reduces the risk of introducing faulty components into active AI clusters. In addition, regular testing and hardware health monitoring help detect degradation or latent failures over time, enabling proactive maintenance rather than reactive recovery.

All these capabilities help ensure that the physical layer remains stable and predictable.

The foundation for bare metal Kubernetes

As a bare metal cloud provider, MAAS can serve as a physical cloud below Kubernetes. It complements workload orchestration by ensuring that the underlying hardware remains available and consistent. MAAS is fully integrated with Juju. This combination provides full system orchestration, enabling coordinated management of the physical machines and workloads.

Infrastructure operations and return on investment

There are many factors that impact the efficiency of an AI infrastructure. Scheduling and workload management influence performance, but hardware availability is mainly driven by infrastructure operations. So the overall output of an AI platform depends on the stable availability of hardware and perfect coordination with the workload management. Slow provisioning, inconsistent configurations, and long recovery processes reduce effective capacity and increase the cost per unit of AI output.

By automating bare metal provisioning and lifecycle management, infrastructure teams can reduce downtime, improve consistency, and keep more accelerators in productive use. In this way, improvements in infrastructure operations translate directly into higher utilization and better return on investment.

For organizations that operate large AI platforms, including AI Factories, bare metal automation is a key factor in the economic performance of the system. Kubernetes provides workload orchestration, but automated bare metal management determines how reliably AI infrastructure can deliver value.

Further reading and next steps

In order to explore these topics in more detail, the following resources provide additional background and practical guidance:

- An overview of how Canonical MAAS enables automated provisioning, hardware testing, and lifecycle management for bare metal infrastructure.

- Guidance on operating Kubernetes-based platforms on bare metal using MAAS and Juju for full-stack infrastructure and platform orchestration.

- The comprehensive guide to enterprise AI infrastructure with open source.

- Take your first steps with MAAS, learning how easy it is to build a fully virtualized sandbox on your laptop in just 30 minutes using Multipass.

Or reach out to our team of experts if you want to learn more about how to get full MAAS support.